Texture Baking is a process in which scene lighting is "baked" into a texture map based on an object's UV texture coordinates. The resulting texture can then be mapped back onto the surface to create realistic lighting in a real-time rendering environment. This technique is frequently used in game engines and virtual reality to create realistic environments with minimal rendering overhead.

In Octane, texture baking is implemented as a special type of camera known as a "Baking" camera which, in contrast to the thin lens and panoramic cameras, has one position and direction per sample. How these are calculated depends on the input UV geometry and the actual geometry being baked.

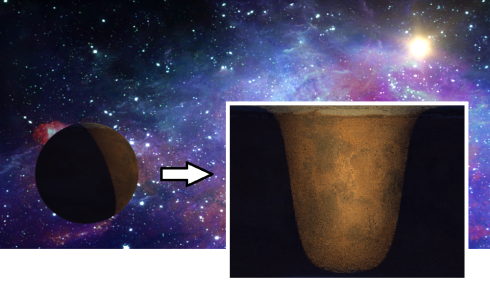

For each sample, the camera calculates the geometry position and normal, then generates a ray that points towards that position along the direction of the normal, starting from a distance equal to the configured kernel’s ray epsilon. Once generated, the ray is traced in the same way as with any other type of camera. Figure 1 shows how lighting is baked onto a model of a planet.

Figure 1: Lighting is baked onto a model of a planet using a texture baking camera
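The per-sample ray setup described above can be sketched in a few lines of Python. This is only an illustration, not Octane's implementation: the `Vertex` class and the `barycentric`, `interpolate`, and `baking_ray` functions are hypothetical names, and the sketch assumes the ray starts `ray_epsilon` above the surface along the normal and travels back towards the surface point.

```python
import math
from dataclasses import dataclass


@dataclass
class Vertex:
    uv: tuple        # (u, v) texture coordinate
    position: tuple  # (x, y, z) world-space position
    normal: tuple    # (x, y, z) shading normal


def barycentric(p, a, b, c):
    """Barycentric weights of 2D point p in triangle (a, b, c), or None if outside."""
    det = (b[1] - c[1]) * (a[0] - c[0]) + (c[0] - b[0]) * (a[1] - c[1])
    if abs(det) < 1e-12:
        return None  # degenerate UV triangle
    w0 = ((b[1] - c[1]) * (p[0] - c[0]) + (c[0] - b[0]) * (p[1] - c[1])) / det
    w1 = ((c[1] - a[1]) * (p[0] - c[0]) + (a[0] - c[0]) * (p[1] - c[1])) / det
    w2 = 1.0 - w0 - w1
    if min(w0, w1, w2) < -1e-9:
        return None  # the UV sample does not fall inside this triangle
    return w0, w1, w2


def interpolate(weights, values):
    """Weighted sum of three 3D vectors."""
    return tuple(sum(w * v[i] for w, v in zip(weights, values)) for i in range(3))


def baking_ray(uv, triangle, ray_epsilon):
    """Return (origin, direction) for one UV sample, or None if it misses the triangle."""
    weights = barycentric(uv, *(v.uv for v in triangle))
    if weights is None:
        return None
    position = interpolate(weights, [v.position for v in triangle])
    normal = interpolate(weights, [v.normal for v in triangle])
    length = math.sqrt(sum(n * n for n in normal))
    if length == 0.0:
        return None
    normal = tuple(n / length for n in normal)
    # Assumption: start ray_epsilon away from the surface and look back at it.
    origin = tuple(p + ray_epsilon * n for p, n in zip(position, normal))
    direction = tuple(-n for n in normal)
    return origin, direction
```

Calling `baking_ray` once per texel centre, for the triangle that covers that texel in UV space, gives every sample its own position and direction, which is what distinguishes the baking camera from a thin lens or panoramic camera.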

Mesh Requirements for Baking

In order for a mesh to be used for texture baking, it should be set up to fulfill the following requirements:

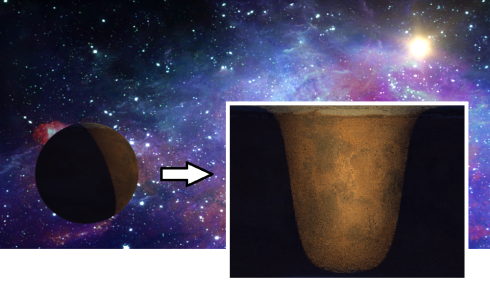

Figure 2: Overlapping UVs as shown in Maya's UV editor

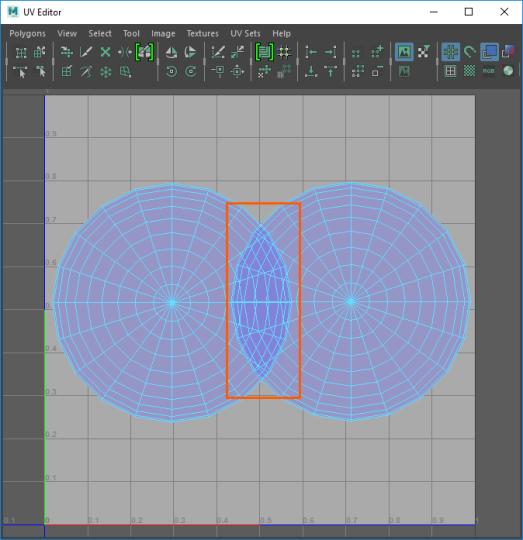

Setting up a Texture Baking camera

The simplest way to start is to create a copy of the scene's Render Target node and switch its camera to a baking camera (Figure 3).

Figure 3: Set the Camera type to Baking Camera

Baking Group ID

Specifies which baking group should be baked. By default, all objects belong to baking group 1. New baking groups can be set up using object layers or object layer maps, similar to the way render layers work.

UV set

Determines which UV set to use for baking.

Revert Baking

If checked, the camera directions are flipped. This allows the mesh to be used to render the rest of the scene.

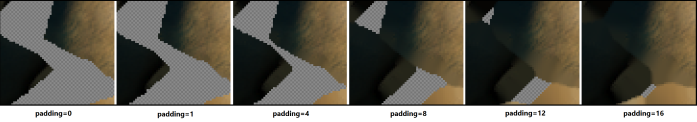

Padding

Padding extends the colors of the texture beyond the borders of the UV shells, which helps to avoid black lines appearing on the model when the baked textures are later mapped back onto the surface.

The padding size is specified in pixels and defaults to 4 pixels. Optionally, an edge noise tolerance can be specified to remove hot pixels that appear near the edges of the UV geometry. Values close to 1 do not remove any hot pixels, while values closer to 0 try to remove them all (Figure 4).

Figure 4: A comparison of different padding settings
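To make the idea of padding concrete, the sketch below grows the baked colors outward by one pixel per pass using a simple neighbor-average dilation. The algorithm Octane actually uses is not described here, so this is a generic stand-in; the function name `pad_texture` and the `covered` mask (true where a UV shell wrote a color) are assumptions made for the example.

```python
def pad_texture(pixels, covered, padding):
    """Grow covered pixels outward by `padding` pixels.

    pixels  -- 2D list of RGB tuples (rows of the baked image)
    covered -- 2D list of bools, True where a UV shell wrote a color
    padding -- number of one-pixel dilation passes to run
    """
    height, width = len(pixels), len(pixels[0])
    pixels = [row[:] for row in pixels]
    covered = [row[:] for row in covered]
    for _ in range(padding):
        new_pixels = [row[:] for row in pixels]
        new_covered = [row[:] for row in covered]
        for y in range(height):
            for x in range(width):
                if covered[y][x]:
                    continue  # already has a baked or padded color
                neighbours = [
                    pixels[ny][nx]
                    for ny in (y - 1, y, y + 1)
                    for nx in (x - 1, x, x + 1)
                    if 0 <= ny < height and 0 <= nx < width and covered[ny][nx]
                ]
                if neighbours:
                    # Fill the empty pixel with the average of its covered neighbours.
                    new_pixels[y][x] = tuple(
                        sum(channel) / len(neighbours) for channel in zip(*neighbours)
                    )
                    new_covered[y][x] = True
        pixels, covered = new_pixels, new_covered
    return pixels
```

With a padding of 4, each UV shell ends up surrounded by a 4-pixel border of its own edge colors, so texture filtering at the shell boundary blends with similar colors rather than with the empty background.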

UV Region

Specifies the area that the baking camera takes into account. This can be used to pan and zoom the camera in case your UV geometry is not within the [0,0]->[1,1] region.
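As an illustration of how a UV region pans and zooms the baking camera, the sketch below maps a pixel centre to a UV coordinate inside a configurable region. The function name and the `region_min` and `region_size` parameters are hypothetical; whether Octane stores the region as a minimum plus a size or in some other form is an assumption.

```python
def pixel_to_uv(x, y, width, height, region_min=(0.0, 0.0), region_size=(1.0, 1.0)):
    """Map the centre of pixel (x, y) to a UV coordinate inside the chosen region."""
    u = region_min[0] + (x + 0.5) / width * region_size[0]
    v = region_min[1] + (y + 0.5) / height * region_size[1]
    return u, v
```

With the default region, the image covers the usual unit square. Setting `region_min=(1.0, 0.0)` would instead bake the neighboring UV tile, and a `region_size` of `(2.0, 2.0)` would zoom out to cover four tiles in a single image.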

Use Baking Position

If a baking position is used, camera rays will be traced from the specified coordinates in world space instead of using the mesh surface as reference. This is useful when baking position-dependent artifacts such as the ones produced by glossy or specular materials.

Baking Groups

To tell the baking camera which geometry to bake, connect the geometry to the baking render target. If the scene has multiple objects and baking groups, also select the matching baking group ID in the baking camera.

For example, to bake the lighting of a room into the textures for the walls (provided the walls do not have overlapping UVs), set the camera's Baking Group ID to 2 and the Baking Group ID of each wall to 2 as well. Then render by selecting the Baking render target node and save the resulting image. To bake a separate texture for the floor, set the Baking Group ID of the floor and of the camera to 3 and render again. Repeat until textures have been baked for all the items in the room. Each saved image can then be used as a texture map in the object's shading network.
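When a scene has many objects, it can help to plan the bake passes before touching the node graph. The sketch below is plain Python bookkeeping and does not call any Octane API: the function name and the object names are hypothetical, and it simply groups objects by baking group ID so that each ID corresponds to one render of the baking render target.

```python
from collections import defaultdict


def plan_bake_passes(object_groups):
    """Group object names by baking group ID, one bake pass per ID.

    object_groups -- dict mapping an object name to its Baking Group ID
    """
    passes = defaultdict(list)
    for name, group_id in object_groups.items():
        passes[group_id].append(name)
    # Sort for a stable, readable plan.
    return {group_id: sorted(names) for group_id, names in sorted(passes.items())}


# Hypothetical room scene following the example in the text.
room = {"wall_north": 2, "wall_south": 2, "floor": 3, "lamp": 1}
for group_id, names in plan_bake_passes(room).items():
    print(f"Set the camera's Baking Group ID to {group_id}, render, save: {names}")
```

Each entry in the plan corresponds to one manual cycle of setting the camera's Baking Group ID, rendering, and saving the image.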

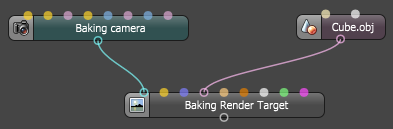

The example below illustrates a minimal baking configuration node graph:

Figure 5: A basic texture baking arrangement

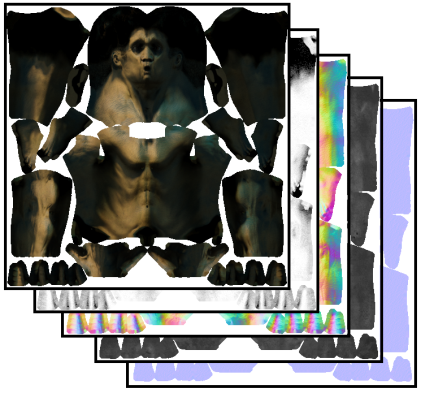

Note that render layers, passes, imager settings, etc. can be used in the same way as with other types of cameras, which makes it possible to extract lighting and material information (Figure 6).

Figure 6: Any of the Octane render passes can be baked into textures

Baking Tips