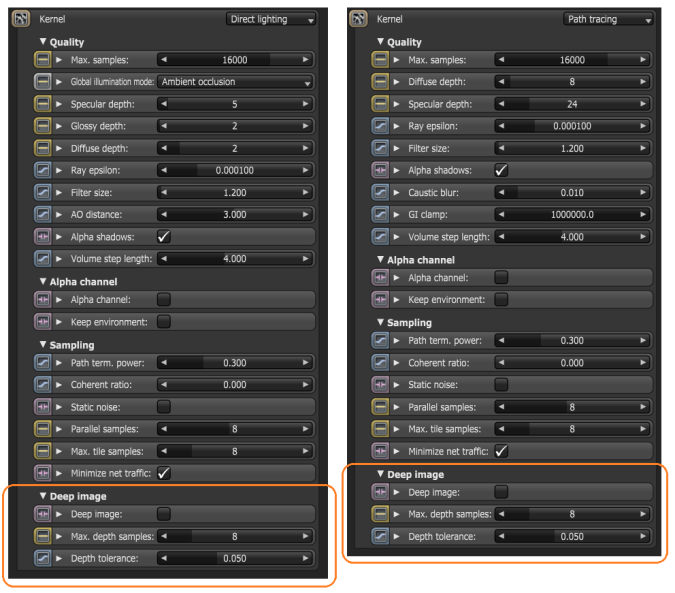

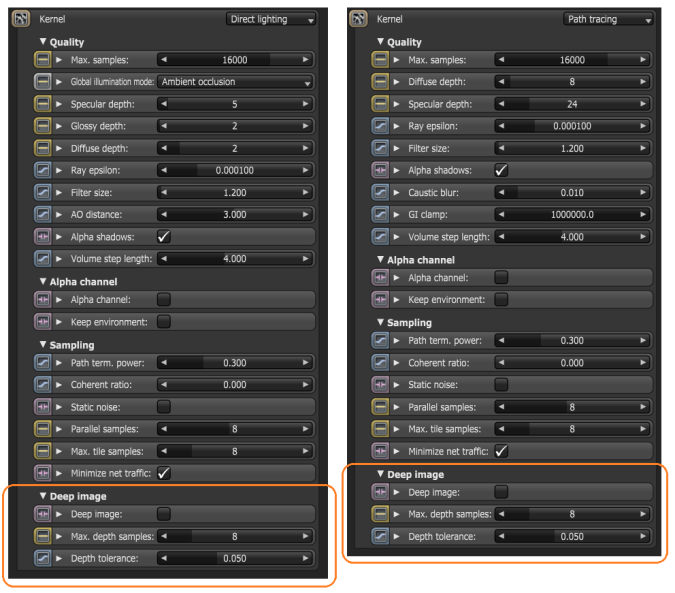

Deep image rendering is enabled via the Kernel node and is supported only by the Path Tracing and Direct Lighting kernels. To enable it, check the Deep image checkbox:

For a typical scene, the GPU renders thousands of samples per pixel, but only a limited amount of VRAM is available. It is therefore necessary to manage the number of samples stored. Two parameters are provided for this purpose:

Calculation of the Deep Bin Distribution

The maximum number of samples per deep pixel is 32, but the other samples are not thrown away. When rendering starts, we collect a number of seed samples that is a multiple of "max. depth samples". From these seed samples we calculate a deep bin distribution, which is a set of bins that characterizes the various depths of the samples of a pixel. There is an upper limit of 32 bins, and the bins are non-overlapping. As we render thousands of samples, each sample that overlaps with a bin is accumulated into that bin. Until this distribution has been created, you can't save the render result, and the "deep image" option in the save image drop-down is disabled.
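The binning idea described above can be illustrated with a minimal Python sketch. This is not Octane's implementation: the gap threshold, the merge rule, and the names `DeepBin`, `build_bins`, and `accumulate` are all assumptions made for illustration. The sketch also assumes that samples falling outside every bin are simply ignored. Only the limit of 32 non-overlapping bins and the seed-then-accumulate order come from the text.

```python
from dataclasses import dataclass

MAX_BINS = 32  # upper limit on bins per pixel, as stated in the text


@dataclass
class DeepBin:
    z_front: float
    z_back: float
    count: int = 0
    value_sum: float = 0.0


def build_bins(seed_depths, max_bins=MAX_BINS, gap=0.01):
    """Build non-overlapping depth bins from the seed samples of one pixel.

    Hypothetical heuristic: consecutive sorted depths closer than `gap`
    share a bin; if that yields too many bins, the two closest
    neighbouring bins are merged until the limit is met.
    """
    depths = sorted(seed_depths)
    if not depths:
        return []
    ranges = [[depths[0], depths[0]]]
    for z in depths[1:]:
        if z - ranges[-1][1] > gap:
            ranges.append([z, z])   # start a new bin
        else:
            ranges[-1][1] = z       # extend the current bin
    while len(ranges) > max_bins:
        # Merge the pair of neighbouring bins separated by the smallest gap.
        i = min(range(len(ranges) - 1),
                key=lambda k: ranges[k + 1][0] - ranges[k][1])
        ranges[i][1] = ranges[i + 1][1]
        del ranges[i + 1]
    return [DeepBin(front, back) for front, back in ranges]


def accumulate(bins, z, value):
    """Add a rendered sample to the bin it overlaps; return False if none does."""
    for b in bins:
        if b.z_front <= z <= b.z_back:
            b.count += 1
            b.value_sum += value
            return True
    return False  # assumption: samples outside all bins are not stored


if __name__ == "__main__":
    bins = build_bins([1.0, 1.001, 1.002, 5.0, 5.005, 9.0])
    accumulate(bins, 5.002, 0.5)
    for b in bins:
        print(b)
```

In this sketch the six seed depths collapse into three bins, so a pixel with a few distinct surfaces needs only a few bins, whereas a continuous volume can consume many.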

Limitations

Using deep bins is only an approximation, and there are limitations to this approach. When rendering deep volumes (deep meaning a large Z extent), there might not be enough bins to represent the volume all the way to the end. In that case the volume is cut off at the back. You can clearly see this if you display the deep pixels as a point cloud in Nuke. You can still use the volume for compositing, but only up to the depth where the first pixel is cut off. If there aren't enough bins for all visible surfaces, some surfaces can be invisible in some pixels. This situation is more problematic, and the best option is to re-render the scene with a bigger upper limit for the deep samples.

A word of warning: after the deep bin distribution has been created, it needs to be uploaded onto the devices for the whole render film. This means that even with tiled rendering, deep image rendering can use a lot of VRAM, so don't be surprised if the devices fail when starting the render. The most likely cause is that the buffers required on the device are too big for the configuration (check the log to make sure). The only thing you can do here is reduce the maximum deep samples or the resolution.
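To get a feel for why reducing the maximum deep samples or the resolution helps, here is a rough back-of-the-envelope estimate in Python. The per-sample layout (four 32-bit colour channels plus two 32-bit depth values) and the function name `deep_buffer_bytes` are assumptions for illustration only. Octane's actual buffer layout is not documented here, so treat the result as an order-of-magnitude guide rather than a prediction.

```python
def deep_buffer_bytes(width, height, max_deep_samples,
                      channels=4, bytes_per_channel=4, depth_values=2):
    """Estimate the size of a full-film deep buffer in bytes.

    Assumed (hypothetical) layout per deep sample: `channels` colour
    values plus `depth_values` depth values (front and back), all 32-bit.
    """
    bytes_per_sample = (channels + depth_values) * bytes_per_channel
    return width * height * max_deep_samples * bytes_per_sample


if __name__ == "__main__":
    for samples in (32, 16, 8):
        size = deep_buffer_bytes(1920, 1080, samples)
        print(f"{samples:2d} deep samples: {size / 2**30:.2f} GiB")
```

Under these assumptions the buffer size scales linearly with both the pixel count and the maximum deep samples, so halving either one halves the estimate.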

Here is an example project and a deep OpenEXR file rendered with it: