The goal of deep image rendering is to improve the compositing workflow by storing Z-depth information with each sample. It works best in scenarios where traditional compositing fails, such as masking out overlapping objects, working with images that have depth of field or motion blur, or compositing footage into rendered volumes. The disadvantage of this approach is the large amount of memory required to render and store deep images.

You can read a more thorough explanation in "The art of deep compositing" (https://www.fxguide.com/featured/the-art-of-deep-compositing/), "Interpreting OpenEXR Deep Pixels" and "Theory of OpenEXR Deep Samples".

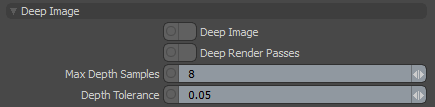

Deep image rendering produces frames with multiple depth samples per pixel in addition to the typical color and opacity channels. Deep Image settings can be accessed from the Render Toolbar > Kernel Button > Kernel tab. These settings are not available in the PMC kernel.

Deep Image - Enables deep image rendering. Only the Path Tracing and Direct Lighting kernels support deep image rendering.

Deep Render Passes - When enabled, all enabled Render Passes are written to the deep pixel channels. By default, only the Beauty Pass is written.

Note: Enabling this feature can use a lot of VRAM if you are rendering a large image.

Max Depth Samples - Specifies an upper limit for the number of deep samples stored per pixel.

Depth Tolerance - Merges the depth samples whose relative depth difference falls below this value.
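To make the Depth Tolerance behavior concrete, here is a minimal Python sketch of tolerance-based merging for a single pixel. It is not Octane's implementation: the function name `merge_by_tolerance`, the `MergedSample` type, and the choice to measure the relative difference against the front depth of the current group are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MergedSample:
    z_front: float  # nearest depth in the merged group
    z_back: float   # farthest depth in the merged group
    count: int      # number of raw depth samples merged into this group


def merge_by_tolerance(depths: List[float], tolerance: float) -> List[MergedSample]:
    """Hypothetical sketch: merge depths whose relative difference is below `tolerance`.

    Assumption: the relative difference is measured against the front depth
    of the group currently being built, which prevents long chains of small
    steps from drifting into one huge group.
    """
    merged: List[MergedSample] = []
    for z in sorted(depths):
        if merged:
            group = merged[-1]
            same_depth = z == group.z_front
            close_enough = (
                group.z_front > 0
                and (z - group.z_front) / group.z_front < tolerance
            )
            if same_depth or close_enough:
                # Extend the current group instead of starting a new one.
                group.z_back = z
                group.count += 1
                continue
        # Depth is too far from the current group: start a new group.
        merged.append(MergedSample(z_front=z, z_back=z, count=1))
    return merged


groups = merge_by_tolerance([10.0, 10.05, 10.2, 20.0, 20.1], tolerance=0.01)
for g in groups:
    print(g)
```

With a tolerance of 0.01, the depths `[10.0, 10.05, 10.2, 20.0, 20.1]` collapse into three groups: 10.05 and 20.1 each lie within 1% of the group in front of them, while 10.2 does not.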

The maximum number of samples per deep pixel is 32, but we don't discard the other samples. When rendering starts, we collect a number of seed samples, which is a multiple of Max Depth Samples. From these seed samples, we calculate a deep bin distribution, a set of bins that characterizes the various depths of the pixel's samples. There is an upper limit of 32 bins, and the bins don't overlap. As we render thousands of samples, each sample that overlaps a bin is accumulated into that bin. You can't save the render result until this distribution has been created.
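The seed-and-bin process described above can be sketched in Python for a single pixel. This is a hypothetical illustration, not Octane's algorithm: only the 32-bin limit comes from this page. The equal-count split of the seed depths, the midpoint bin edges, the names `build_bins` and `accumulate`, and the decision to drop samples that fall outside every bin are assumptions, and the collection of the seeds themselves is not modeled.

```python
import bisect
from typing import List, Tuple

MAX_BINS = 32  # upper limit on bins per deep pixel, as stated on this page


def build_bins(seed_depths: List[float], max_depth_samples: int) -> List[float]:
    """Return contiguous, non-overlapping bin edges (n_bins + 1 values).

    Hypothetical sketch: split the sorted seed depths into groups of (nearly)
    equal size and place each interior edge halfway between neighbouring groups.
    """
    seeds = sorted(seed_depths)
    n_bins = min(max_depth_samples, MAX_BINS, len(seeds))
    if n_bins == 0:
        return []
    # Index of the first seed in each group.
    starts = [i * len(seeds) // n_bins for i in range(n_bins)]
    edges = [seeds[0]]
    for s in starts[1:]:
        edges.append(0.5 * (seeds[s - 1] + seeds[s]))
    edges.append(seeds[-1])
    return edges


def accumulate(edges: List[float],
               samples: List[Tuple[float, float]]) -> Tuple[List[int], List[float], int]:
    """Accumulate (depth, value) samples into the bins; return counts, sums, dropped."""
    n_bins = len(edges) - 1
    counts = [0] * max(n_bins, 0)
    sums = [0.0] * max(n_bins, 0)
    dropped = 0
    for z, value in samples:
        # Assumption: samples outside the binned depth range are discarded.
        if n_bins <= 0 or z < edges[0] or z > edges[-1]:
            dropped += 1
            continue
        # Index of the last edge <= z; clamp so z == edges[-1] lands in the last bin.
        i = min(bisect.bisect_right(edges, z) - 1, n_bins - 1)
        counts[i] += 1
        sums[i] += value
    return counts, sums, dropped


edges = build_bins([1.0, 1.1, 5.0, 5.2, 9.0, 9.1], max_depth_samples=3)
counts, sums, dropped = accumulate(edges, [(1.05, 1.0), (5.1, 1.0), (9.05, 1.0), (12.0, 1.0)])
print(counts, dropped)
```

In this example, the sample at depth 12.0 lies behind the last bin and is dropped, which is the kind of truncation that affects deep volumes with a large Z extent.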

Using deep bins is only an approximation, and this approach has limitations. When rendering deep volumes (volumes with a large Z extent), there might not be enough bins to represent the volume all the way to the back, so the volume is cut off at the rear. You can see this if you display the deep pixels as a point cloud in Nuke®. You can still use such a volume for compositing, but only up to the depth where the first pixel is cut off. If there aren't enough bins for all visible surfaces, some surfaces can be missing from some pixels. This situation is more problematic, and the best option is to re-render the scene with a higher upper limit for the deep samples (Max Depth Samples).

After the deep bin distribution is created, it has to be uploaded to the devices for the whole render film. Even with tiled rendering, deep image rendering uses a lot of VRAM, so don't be surprised if the devices fail when the render starts. The buffers required on the device can be too large for the configuration; check the log to confirm. The only remedy is to reduce the Max Depth Samples parameter or the resolution.
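A rough estimate shows why the devices can run out of memory. The Python sketch below multiplies the resolution, Max Depth Samples, and the number of deep render passes by an assumed per-bin storage cost. The layout (four 32-bit floats of RGBA per pass plus two 32-bit floats for each bin's depth range) and the function name `estimate_deep_buffer_bytes` are assumptions; Octane's actual buffer layout is not described here, and real usage also includes other render buffers, so treat the result as an order-of-magnitude guide only.

```python
BYTES_PER_FLOAT = 4
RGBA_FLOATS_PER_PASS = 4  # assumed: RGBA stored per pass in every bin
DEPTH_FLOATS_PER_BIN = 2  # assumed: front and back depth, shared by all passes
GIB = 1024 ** 3


def estimate_deep_buffer_bytes(width: int, height: int,
                               max_depth_samples: int,
                               num_passes: int = 1) -> int:
    """Hypothetical estimate of the deep bin buffer size for the whole film.

    The whole render film is covered, even with tiled rendering, so the
    estimate scales with the full resolution rather than with a tile size.
    """
    bytes_per_bin = BYTES_PER_FLOAT * (
        RGBA_FLOATS_PER_PASS * num_passes + DEPTH_FLOATS_PER_BIN
    )
    return width * height * max_depth_samples * bytes_per_bin


for passes in (1, 3):
    size = estimate_deep_buffer_bytes(1920, 1080, 32, passes)
    print(f"{passes} pass(es): {size / GIB:.2f} GiB")
```

Under these assumptions, a 1920×1080 frame with Max Depth Samples set to 32 needs about 1.48 GiB for the Beauty Pass alone and about 3.46 GiB with three deep render passes, which is why lowering Max Depth Samples or the resolution is the effective fix.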